Time for AI to cross the human performance range in Go

Published 15 October, 2020; last updated 08 March, 2021

Progress in computer Go performance took:

- 0-19 years to go from the first attempt to playing at human beginner level (<1987)

- >30 years to go from human beginner level to superhuman level (<1987-2017)

- 3 years to go from superhuman level to the current highest performance (2017-2020)

Details

Human performance milestones

Human Go ratings range from 30 kyu (beginner), through 7 dan, to at least 9 professional dan.1 These ratings count downward through the kyu levels, then upward through the dan levels, then upward through the professional dan levels. The top ratings seem to be closer together than the lower ones, though there are apparently multiple rating systems, which vary.2

AI achievement of human milestones

Earliest attempt

Wikipedia says the first Go program was written in 1968.3 We do not know how well it performed.

Beginner level

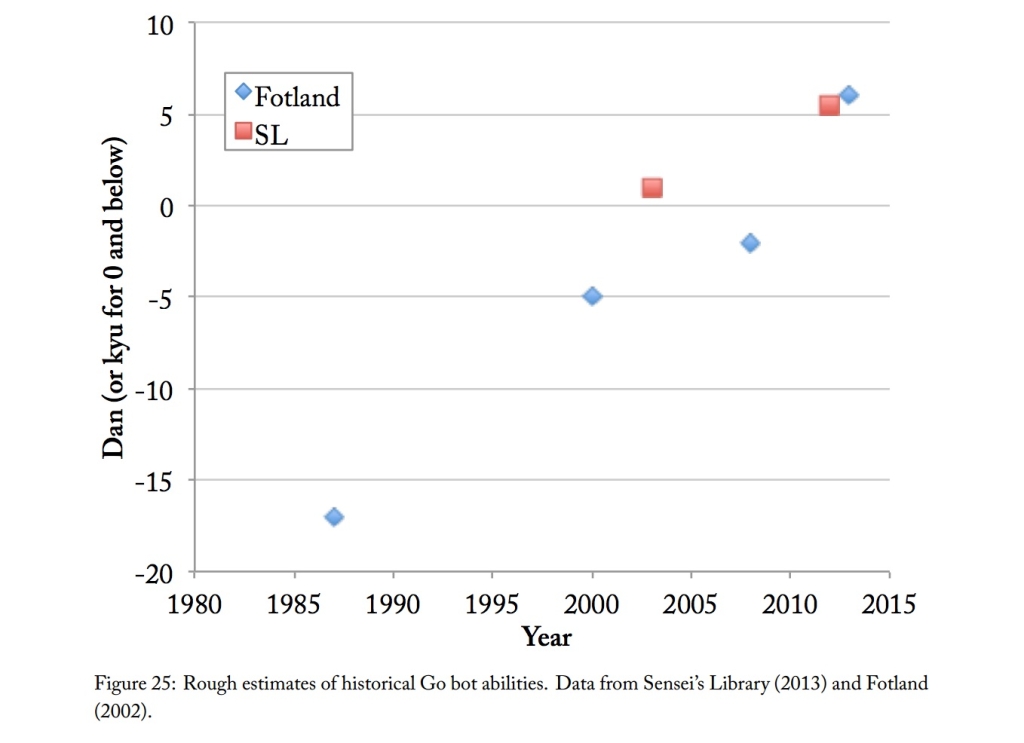

We have not investigated early Go performance in depth. Figure 1 includes informed guesses about early performance from two sources: David Fotland, author of the successful Go program The Many Faces of Go, and Sensei’s Library, a Go wiki.4 Fotland says that early data on AI Go performance is poor: bots did not play in tournaments, so they were not rated.

This suggests that by 1987 Go bots were performing better than human beginners. We do not have the evidence to date beginner-level AI more precisely, but we have also not investigated thoroughly, and more evidence appears to be available.

Superhuman level

In May 2017 AlphaGo beat Ke Jie, the top-ranked Go player in the world.5 This does not by itself imply that AlphaGo was better overall. However, a new version in October could beat the May version in 89 of 100 games,6 which suggests that if the May version would have beaten Ke Jie in more than 11% of games, the new version would beat Ke Jie more than half the time, i.e. perform better than the best human player. Thus 2017 seems like a reasonable date for top human-level play.
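The 11% threshold can be checked with a short calculation. The sketch below assumes an Elo-style (Bradley-Terry) model in which win odds multiply along a chain of players; that model is our assumption for illustration and is not stated in the sources. The function names are hypothetical.

```python
def odds(p):
    """Convert a win probability into win odds (wins per loss)."""
    return p / (1.0 - p)


def prob(o):
    """Convert win odds back into a win probability."""
    return o / (1.0 + o)


def chained_win_probability(p_a_beats_b, p_b_beats_c):
    """Estimate P(A beats C) from P(A beats B) and P(B beats C).

    Assumes an Elo-style model, under which the odds of A beating C
    equal the product of the odds along the chain A -> B -> C.
    """
    return prob(odds(p_a_beats_b) * odds(p_b_beats_c))


# October 2017 version vs. May 2017 version: 89 wins in 100 games.
p_new_vs_may = 0.89

# Hypothetical win rates for the May version against Ke Jie.
for p_may_vs_ke_jie in (0.05, 0.11, 0.25, 0.50):
    p = chained_win_probability(p_new_vs_may, p_may_vs_ke_jie)
    print(f"May version wins {p_may_vs_ke_jie:.0%} -> new version wins {p:.1%}")
```

Under this assumption, a May-version win rate of exactly 11% puts the new version at even odds against Ke Jie, and any higher rate puts it above 50%, which matches the threshold used in the argument above.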

Times for AI to cross human-relative ranges

Given the above dates, we have:

| Range | Start | End | Duration (years) |
| --- | --- | --- | --- |
| First attempt to beginner level | 1968 | <1987 | <19 |
| Beginner to superhuman | <1987 | 2017 | >30 |
| Above superhuman | 2017 | >2020 | >3 |

Primary author: Katja Grace

Notes

1. “Go Ranks and Ratings.” In Wikipedia, June 20, 2020. https://en.wikipedia.org/w/index.php?title=Go_ranks_and_ratings&oldid=963489455.

2. See table: “Go Ranks and Ratings.” In Wikipedia, June 20, 2020. https://en.wikipedia.org/w/index.php?title=Go_ranks_and_ratings&oldid=963489455.

3. “The first Go program was written by Albert Lindsey Zobrist in 1968 as part of his thesis on pattern recognition.[11] It introduced an influence function to estimate territory and Zobrist hashing to detect ko.”

4. “Figure 25 shows estimates from two sources: David Fotland—author of The Many Faces of Go, an Olympiad-winning Go program—and Sensei’s Library, a collaborative Go wiki. David Fotland warns that the data from before bots played on KGS is poor, as programs tended not to play in human tournaments and so failed to get ratings.” Grace, Katja. “Algorithmic Progress in Six Domains.” Berkeley, CA: Machine Intelligence Research Institute, 2013.

5. “In May 2017, AlphaGo beat Ke Jie, who at the time was ranked top in the world,[27][28] in a three-game match during the Future of Go Summit.[29]” “Computer Go.” In Wikipedia, July 27, 2020. https://en.wikipedia.org/w/index.php?title=Computer_Go&oldid=969736537.

6. “In October 2017, DeepMind revealed a new version of AlphaGo, trained only through self play, that had surpassed all previous versions, beating the Ke Jie version in 89 out of 100 games.[30]” “Computer Go.” In Wikipedia, July 27, 2020. https://en.wikipedia.org/w/index.php?title=Computer_Go&oldid=969736537.